How racial, gender, and socioeconomic biases creep into medical AI – and what it means for your health

A Fatal Blind Spot

In 2022, a 28-year-old Black woman in Atlanta visited an urgent care clinic for chest pain. The AI system screening her symptoms dismissed her as “low risk” for a heart attack. Hours later, she collapsed from a massive coronary event.

This wasn’t an isolated incident. A Lancet Digital Health audit revealed that 81% of cardiac AI tools perform worse for women and people of color – a disparity traced to their training on predominantly white, male medical data.

The Dirty Secret of Medical AI

Three pervasive biases plague diagnostic algorithms:

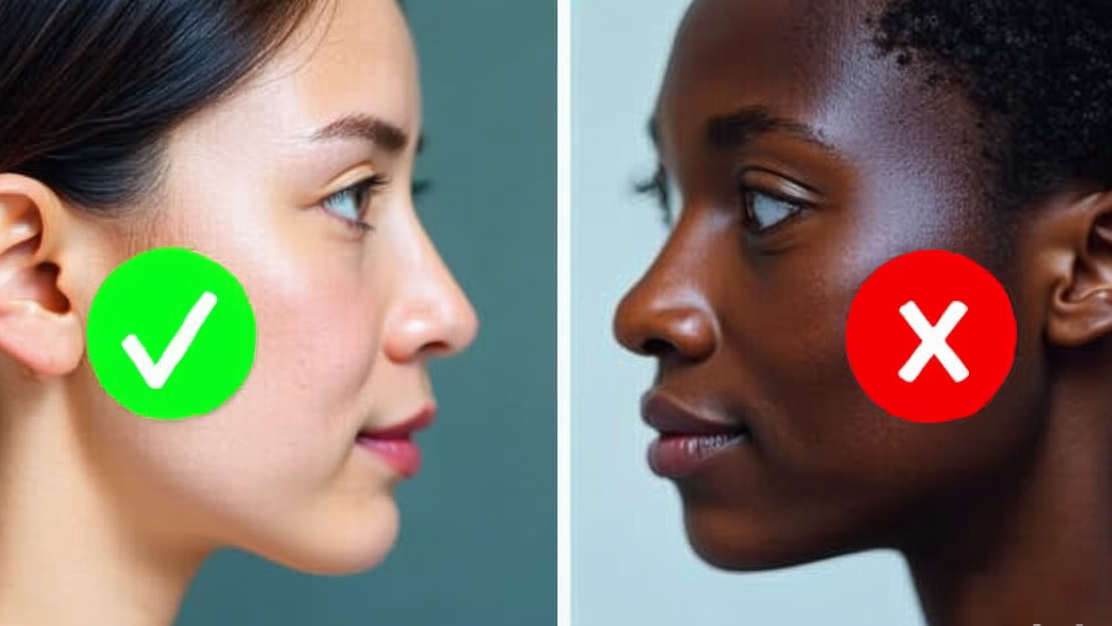

1. The Skin Tone Gap

- Dermatology AIs achieve 95% accuracy diagnosing melanoma – but only for fair skin

- 42% error rate for conditions on dark skin (vs. 8% on light skin) – NIH 2023 study

- Reason: 94% of training images came from Caucasian patients

2. The “Invisible Woman” Effect

- Heart attack prediction AIs miss 34% more cases in women

- Reason: the models were trained primarily on typical male symptoms (crushing chest pain) and overlook presentations more common in women (fatigue, jaw pain)

3. The Poverty Penalty

- AI models predicting diabetes risk overdiagnose wealthy urban patients by 22%

- Rural and low-income patients get flagged later because their data is scarcer in training sets
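Gaps like these only show up when a tool's results are broken down by patient group instead of being reported as one overall accuracy figure. Here is a minimal sketch of that kind of check, measuring how often a model misses real cases in each group. The function name `miss_rate_by_group`, the record layout, and the toy data are all made up for illustration; they are not taken from any of the studies or products mentioned above.

```python
from collections import defaultdict

def miss_rate_by_group(records):
    """Return the share of truly sick patients the model failed to flag, per group.

    Each record is a tuple: (group, actually_sick, flagged_by_model).
    This layout is a hypothetical one chosen for the example.
    """
    sick = defaultdict(int)    # truly sick patients seen in each group
    missed = defaultdict(int)  # truly sick patients the model did not flag
    for group, actually_sick, flagged in records:
        if actually_sick:
            sick[group] += 1
            if not flagged:
                missed[group] += 1
    return {group: missed[group] / sick[group] for group in sick}

# Invented toy data: four sick patients per group, plus two healthy ones.
records = [
    ("men", True, True), ("men", True, True),
    ("men", True, True), ("men", True, False),
    ("women", True, True), ("women", True, True),
    ("women", True, False), ("women", True, False),
    ("men", False, False), ("women", False, False),
]

rates = miss_rate_by_group(records)
print(rates)
```

In this toy data the model misses one of four sick men and two of four sick women, a gap that a single blended accuracy number would hide.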

How Hospitals Enable the Problem

Leaked emails from a major health system show administrators rushing biased AI into production:

*“Per CEO directive: Launch the sepsis AI by Q3 despite the minority performance gap. We’ll ‘address disparities’ post-release.”*

Meanwhile, patients are rarely told when AI is used – let alone about its limitations.

Fighting Back Against Algorithmic Bias

For Patients:

- Ask: “Was AI involved in my diagnosis? What populations was it trained on?”

- Demand alternative screening if you’re in an under-represented group

- Report suspected bias to the FDA’s AI incident database

For Providers:

- Vet medical AI with Johns Hopkins’ new Bias Checklist

- Join MIT’s “Diverse Data Pledge” – 40 hospitals committing to inclusive training sets

The Bigger Picture

This isn’t just about technology – it’s about which lives our healthcare values most. As AI reshapes medicine, we must choose: Will it magnify our existing biases, or help us overcome them?

Tomorrow in Part 3: Who’s selling your medical scans? The $12B shadow market in AI training data.